One could easily go overboard in the design of all these new classifier and mapper functions, as the combinations of all the different parameters one can change are virtually endless. So it's a good idea to have a fast and simple way of measuring and comparing their performance so that we can keep just the ones that perform well in the GeneXproTools environment when we put them to work together with other functions.

So, in a kind of meta-analysis, I'm using GeneXproTools itself to decide which functions to add to its built-in math functions and which ones to reject! And what's more interesting, you don't need access to the source code to do this kind of analysis: anyone can do it using the Custom Math Functions of GeneXproTools!

I chose the Iris dataset for this study because it's simple, with just 3 classes (Iris Setosa, Iris Virginica and Iris Versicolor), and because it's well balanced, with 50 records for each of the 3 classes. Indeed, you don't need very sophisticated classification algorithms to solve this problem successfully, but you do need a few simple tools to solve it in one go, such as the new 3-6 discrete output functions we've been talking about.

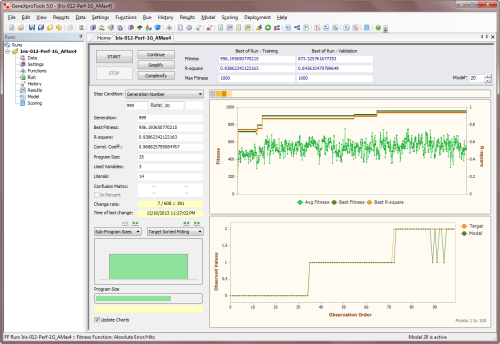

So the setup is very simple: I'm using a unigenic system with a single tree and a head size of 12. As for the other settings (population size, genetic operators, random numerical constants, etc.), I'm using the default values of GeneXproTools for Regression. For the function set I'm using the Regression defaults too, plus the function under study weighted 20 times.

Then, using the Absolute Error/Hits fitness function with a Precision of zero, I perform 20 runs of 1000 generations each, keeping just the last model of each run. Next I compute the average percentage of hits for each study by choosing the Hits favorite statistic in the History Panel. This is the value I'm giving you in the posts whenever I report how well a particular function performs and how it compares to the argmax function of 4 arguments, which, as I said in the post "Function Design: Argmin & Argmax", will be used as my point of reference.
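For anyone who wants to reproduce this statistic outside GeneXproTools, here is a minimal Python sketch of the calculation. It assumes that a hit is a record whose absolute error is within the Precision (so with a Precision of zero the model output must match the class label exactly), and that the model outputs of each run have been exported as plain lists. The function names and the toy data are hypothetical and are not part of GeneXproTools.

```python
from statistics import mean


def count_hits(predictions, targets, precision=0.0):
    """Count records whose absolute error is within `precision`.

    Assumption: with precision = 0 a hit means the model output
    equals the target class label exactly.
    """
    return sum(1 for p, t in zip(predictions, targets) if abs(p - t) <= precision)


def hit_percentage(predictions, targets, precision=0.0):
    """Percentage of hits for the final model of a single run."""
    return 100.0 * count_hits(predictions, targets, precision) / len(targets)


def average_hit_percentage(runs, targets, precision=0.0):
    """Average the hit percentage over the final models of all runs."""
    return mean(hit_percentage(preds, targets, precision) for preds in runs)


# Toy data (hypothetical): 6 records with class labels 0, 1, 2
# and the outputs of the final models of 3 runs.
targets = [0, 1, 2, 0, 1, 2]
runs = [
    [0, 1, 2, 0, 1, 2],  # 6 of 6 hits
    [0, 1, 1, 0, 1, 2],  # 5 of 6 hits
    [0, 2, 2, 0, 0, 2],  # 4 of 6 hits
]

avg = average_hit_percentage(runs, targets)
print(f"Average hits: {avg:.2f}%")
```

In the study itself there would be 20 entries in `runs`, one per run, each with 150 outputs for the 150 Iris records.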
